Artificial Intelligence

Data owners often hesitate to share data with Machine Learning developers because of concerns about leaks, theft, illegal use, and the protection of trade secrets.

By 2025:

80% of the largest global organizations will have participated at least once in Federated Machine Learning to create more accurate, secure, and environmentally sustainable models.

60% of large organizations will use Privacy-Enhancing Computation techniques to protect privacy in untrusted environments or for analytics.

Data remains accessible only to its owner during transfer and processing; no one else can access it in its original or interpretable form.

Ideal for teams working with Machine Learning and Sensitive Data.

Protects data at every stage of ML development, from data transfer to model training and inference.

Compatible with a wide range of popular Data Types and ML Architectures.

Secure transmission over the network

Secure storage

Training on secure data

Optional additional component: obtaining the network's response securely

Inference on secure data

Meet internal security and compliance standards to prevent project interruptions

Benefits

Enhance ML model quality with secure data integration

Monetize datasets safely

Stand out by ensuring data confidentiality

Comply with data security regulations

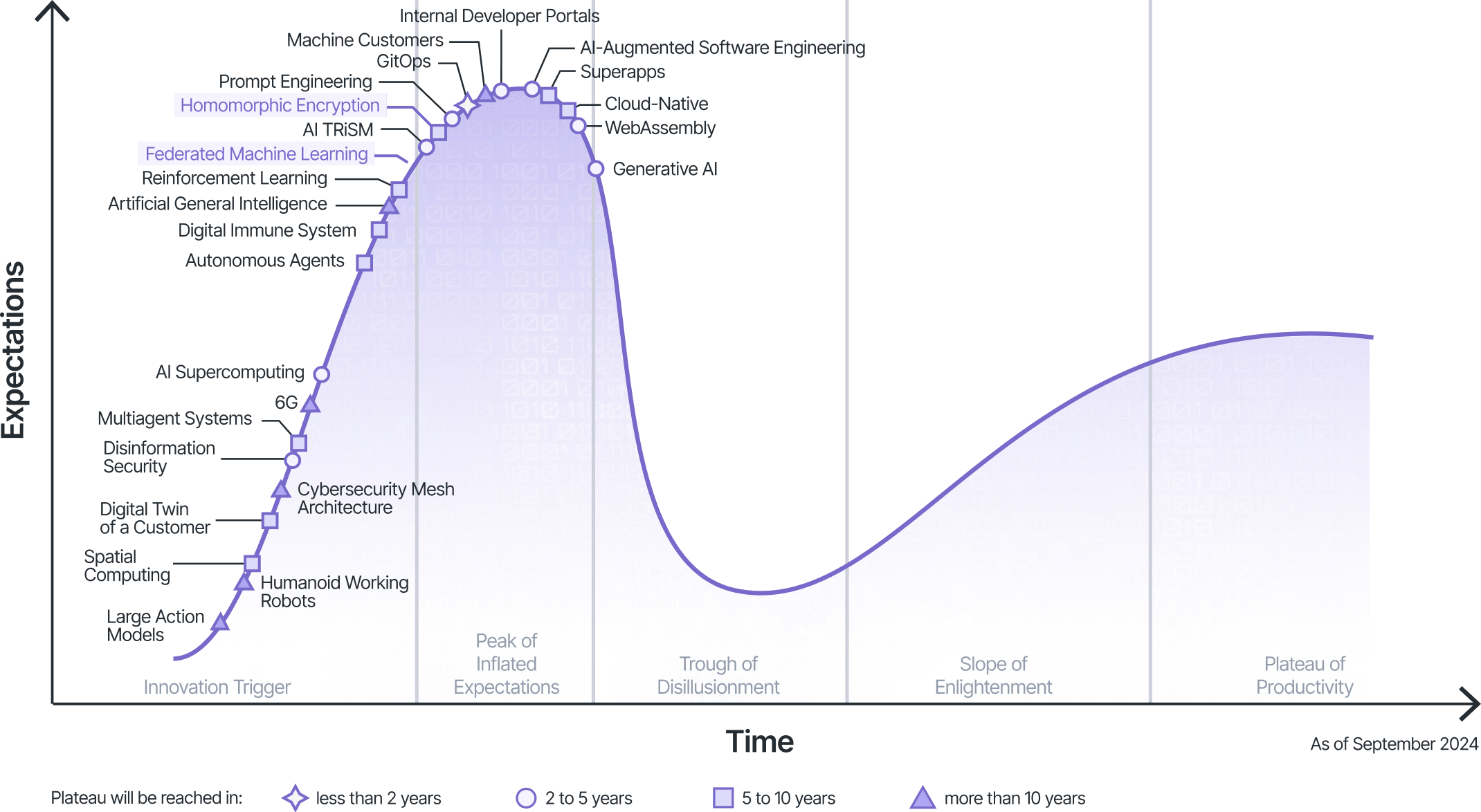

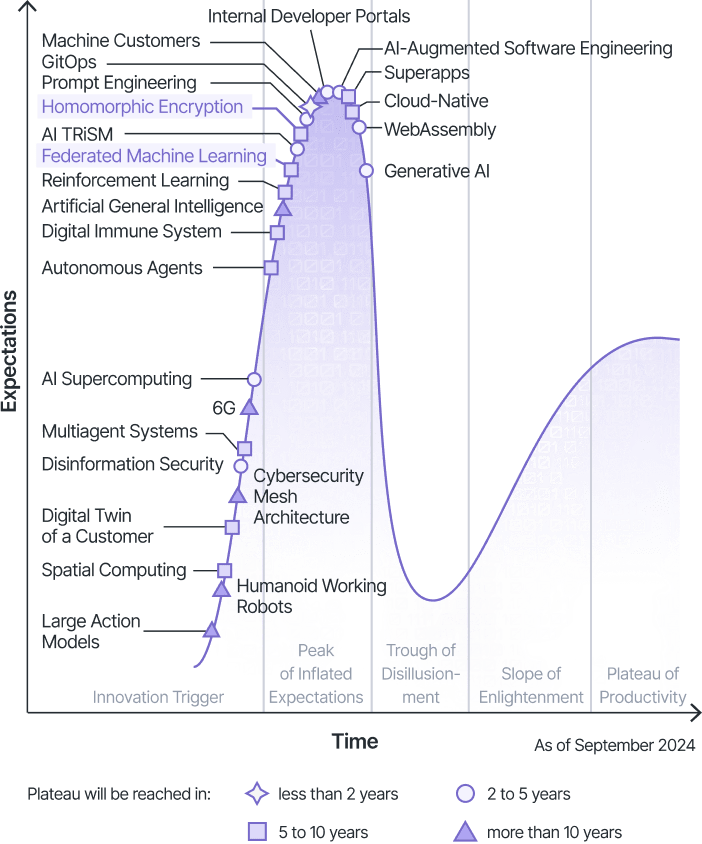

Federated Learning

Building a Collective Brain from Scattered Thoughts.

FL lets multiple parties jointly train a machine learning model: each party trains on its own data locally and shares only model updates, so the original data never leaves its owner.

It's like a collaborative brainstorming session where everyone contributes ideas without revealing their thought process.
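To make the idea concrete, here is a minimal sketch of Federated Averaging (FedAvg), one common FL algorithm, assuming a toy one-dimensional linear model y ≈ w·x + b. It is an illustration, not the implementation behind this product; the function names (`local_train`, `fed_avg`, `federated_training`) and the client datasets are hypothetical. Each client runs gradient descent on its private data, and the server only ever sees the resulting parameters, which it averages weighted by each client's sample count.

```python
import random

def local_train(weights, data, lr=0.05, epochs=20):
    """Gradient descent on one client's private data; returns updated (w, b)."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        # Mean-squared-error gradients for the model y ≈ w*x + b
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)

def fed_avg(client_weights, client_sizes):
    """Server step: average client parameters, weighted by sample count."""
    total = sum(client_sizes)
    w = sum(cw[0] * s for cw, s in zip(client_weights, client_sizes)) / total
    b = sum(cw[1] * s for cw, s in zip(client_weights, client_sizes)) / total
    return (w, b)

def federated_training(clients, rounds=30):
    global_weights = (0.0, 0.0)
    for _ in range(rounds):
        # Each client trains locally; only its (w, b) is sent to the server
        updates = [local_train(global_weights, data) for data in clients]
        global_weights = fed_avg(updates, [len(d) for d in clients])
    return global_weights

if __name__ == "__main__":
    random.seed(0)
    # Hypothetical private datasets: three parties sampling y = 3x + 1 with noise
    clients = []
    for _ in range(3):
        xs = [random.uniform(-1, 1) for _ in range(50)]
        clients.append([(x, 3 * x + 1 + random.gauss(0, 0.1)) for x in xs])
    w, b = federated_training(clients)
    print(f"w={w:.2f}, b={b:.2f}")
```

The shared model approaches w = 3, b = 1 even though no client's raw (x, y) pairs are ever pooled. Production FL systems typically add protections on top of this, such as secure aggregation or differential privacy on the updates, because model updates themselves can leak information.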

Fully Homomorphic Encryption

Secure Data Processing without Decryption.

Imagine a world where your data remains encrypted even while it's being processed, analyzed, and shared.

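FHE makes this possible: computations are performed directly on ciphertexts, and decrypting the result gives the same output as performing the computation on the unencrypted data.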
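The core idea of computing on ciphertexts can be illustrated with a toy scheme over the integers, modeled on the symmetric variant of the DGHV construction (van Dijk, Gentry, Halevi, Vaikuntanathan). This is a hedged sketch, not FHE as deployed: it is only *somewhat* homomorphic (noise grows with each operation and there is no bootstrapping), the parameters are far too small to be secure, and the names `keygen`, `encrypt`, `decrypt`, `add`, and `mul` are hypothetical. Practical FHE schemes such as BFV, CKKS, and TFHE are built on lattice problems instead. A bit m is encrypted as c = p·q + 2r + m; adding ciphertexts XORs the hidden bits and multiplying them ANDs the hidden bits.

```python
import secrets

# Toy, insecure parameters chosen for illustration only
P_BITS = 64       # bit length of the secret odd key p
Q_BITS = 128      # bit length of the random multiplier q
NOISE_BITS = 8    # bit length of the small noise r

def keygen():
    # Secret key: a random odd integer of exactly P_BITS bits
    return secrets.randbits(P_BITS - 1) | (1 << (P_BITS - 1)) | 1

def encrypt(p, bit):
    q = secrets.randbits(Q_BITS)
    r = secrets.randbits(NOISE_BITS)
    return p * q + 2 * r + bit

def decrypt(p, c):
    # c mod p leaves the noise term (2r + bit), valid while the noise stays below p
    return (c % p) % 2

def add(c1, c2):
    # Sum of ciphertexts encrypts bit1 XOR bit2; noise grows additively
    return c1 + c2

def mul(c1, c2):
    # Product of ciphertexts encrypts bit1 AND bit2; noise grows multiplicatively
    return c1 * c2

if __name__ == "__main__":
    p = keygen()
    # Evaluate (a AND b) XOR c without ever decrypting the inputs
    for a in (0, 1):
        for b in (0, 1):
            for c in (0, 1):
                ca, cb, cc = (encrypt(p, m) for m in (a, b, c))
                assert decrypt(p, add(mul(ca, cb), cc)) == (a & b) ^ c
    print("all 8 encrypted evaluations match the plaintext results")
```

Only one multiplication and one addition are evaluated here, which keeps the noise (below 2^19) far smaller than p (at least 2^63). Deeper circuits would eventually overflow the noise budget; bootstrapping, Gentry's technique for refreshing a ciphertext, is what turns such a scheme into fully homomorphic encryption.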

Differential Privacy

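Limits data leakage by adding calibrated random noise, so that a result reveals little about whether any single individual's record is in the dataset.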
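A standard way to add this noise is the Laplace mechanism. Below is a minimal sketch for a counting query, assuming the usual add/remove-one-record notion of neighboring datasets. A count changes by at most 1 under that notion (sensitivity 1), so adding Laplace noise with scale 1/ε gives ε-differential privacy. The function names and the dataset are hypothetical, and this is not necessarily the mechanism the product uses. A smaller ε means more noise and stronger privacy.

```python
import random

def laplace_noise(scale):
    # The difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale)
    return random.expovariate(1 / scale) - random.expovariate(1 / scale)

def private_count(records, predicate, epsilon):
    """Release a count with epsilon-differential privacy (Laplace mechanism).

    Adding or removing one record changes the count by at most 1
    (sensitivity 1), so Laplace noise with scale 1/epsilon suffices.
    """
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1 / epsilon)

if __name__ == "__main__":
    random.seed(42)
    # Hypothetical dataset: ages of 1,000 people
    ages = [random.randint(18, 90) for _ in range(1000)]
    noisy = private_count(ages, lambda a: a >= 65, epsilon=0.5)
    print(f"noisy count of people aged 65+: {noisy:.1f}")
```

Each released answer consumes privacy budget: answering k such queries with ε each gives kε-differential privacy in total, which is why real deployments track a cumulative budget.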